A few weeks ago, I witnessed Google Search make what could have been the most expensive error in its history. In response to a query about cheating in chess, Google’s new AI Overview told me that the young American player Hans Niemann had “admitted to using an engine,” or a chess-playing AI, after defeating Magnus Carlsen in 2022—implying that Niemann had confessed to cheating against the world’s top-ranked player. Suspicion about the American’s play against Carlsen that September indeed sparked controversy, one that reverberated even beyond the world of professional chess, garnering mainstream news coverage and the attention of Elon Musk.

Except, Niemann admitted no such thing. Quite the opposite: He has vigorously defended himself against the allegations, going so far as to file a $100 million defamation lawsuit against Carlsen and several others who had accused him of cheating or punished him for the unproven allegation—Chess.com, for example, had banned Niemann from its website and tournaments. Although a judge dismissed the suit on procedural grounds, Niemann has been cleared of wrongdoing, and Carlsen has agreed to play him again. But the prodigy is still seething: Niemann recently spoke of an “undying and unwavering resolve” to silence his haters, saying, “I’m going to be their biggest nightmare for the rest of their lives.” Could he insist that Google and its AI, too, are on the hook for harming his reputation?

The error turned up when I was searching for an article I had written about the controversy, which Google’s AI cited. In it, I noted that Niemann has admitted to using a chess engine exactly twice, both times when he was much younger, in online games. All Google had to do was paraphrase that. But mangling nuance into libel is precisely the type of mistake we should expect from AI models, which are prone to “hallucination”: inventing sources, misattributing quotes, rewriting the course of events. Google’s AI Overviews have also falsely asserted that chicken is safe to eat at 102 degrees Fahrenheit and that Barack Obama is Muslim. (Google repeated the error about Niemann’s alleged cheating multiple times, and stopped doing so only after I sent Google a request for comment. A spokesperson for the company told me that AI Overviews “sometimes present information in a way that doesn’t provide full context” and that the company works quickly to fix “instances of AI Overviews not meeting our policies.”)

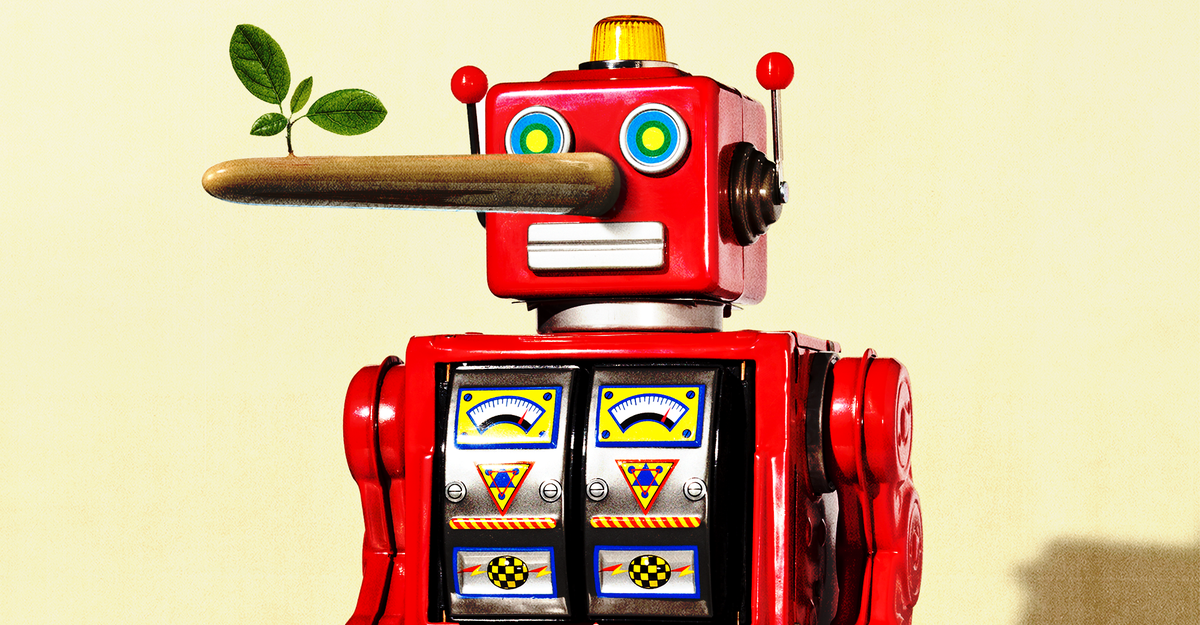

Over the past few months, tech companies with billions of users have begun thrusting generative AI into more and more consumer products, and thus into potentially billions of people’s lives. Chatbot responses are in Google Search, AI is coming to Siri, AI responses are all over Meta’s platforms, and all manner of businesses are lining up to buy access to ChatGPT. In doing so, these corporations seem to be breaking a long-held creed that they are platforms, not publishers. (The Atlantic has a corporate partnership with OpenAI. The editorial division of The Atlantic operates independently from the business division.) A traditional Google Search or social-media feed presents a long list of content produced by third parties, which courts have found the platform is not legally responsible for. Generative AI flips the equation: Google’s AI Overview crawls the web like a traditional search, but then uses a language model to compose the results into an original answer. I didn’t say Niemann cheated against Carlsen; Google did. In doing so, the search engine acted as both a speaker and a platform, or “splatform,” as the legal scholars Margot E. Kaminski and Meg Leta Jones recently put it. It may be only a matter of time before an AI-generated lie about a Taylor Swift affair goes viral, or Google accuses a Wall Street analyst of insider trading. If Swift, Niemann, or anybody else had their life ruined by a chatbot, whom would they sue, and how? At least two such cases are already under way in the United States, and more are likely to follow.

Holding OpenAI, Google, Apple, or any other tech company legally and financially accountable for defamatory AI—that is, for their AI products outputting false statements that damage someone’s reputation—could pose an existential threat to the technology. But nobody has had to do so until now, and some of the established legal standards for suing a person or an organization for written defamation, or libel, “lead you to a set of dead ends when you’re talking about AI systems,” Kaminski, a professor who studies the law and AI at the University of Colorado at Boulder, told me.

To win a defamation claim, someone generally has to show that the accused published false information that damaged their reputation, and prove that the false statement was made with negligence or “actual malice,” depending on the situation. In other words, you have to establish the mental state of the accused. But “even the most sophisticated chatbots lack mental states,” Nina Brown, a communications-law professor at Syracuse University, told me. “They can’t act carelessly. They can’t act recklessly. Arguably, they can’t even know information is false.”

Even as tech companies speak of AI products as though they are actually intelligent, even humanlike or creative, they are fundamentally statistics machines connected to the internet—and flawed ones at that. A corporation and its employees “are not really directly involved with the preparation of that defamatory statement that gives rise to the harm,” Brown said—presumably, nobody at Google is directing the AI to spread false information, much less lies about a specific person or entity. They’ve just built an unreliable product and placed it within a search engine that was once, well, reliable.

One way forward could be to ignore Google altogether: If a human believes that information, that’s their problem. Someone who reads a false, AI-generated statement, doesn’t confirm it, and widely shares that information does bear responsibility and could be sued under current libel standards, Leslie Garfield Tenzer, a professor at the Elisabeth Haub School of Law at Pace University, told me. A journalist who took Google’s AI output and republished it might be liable for defamation, and for good reason if the false information would not have otherwise reached a broad audience. But such an approach may not get at the root of the problem. Indeed, defamation law “potentially protects AI speech more than it would human speech, because it’s really, really hard to apply these questions of intent to an AI system that’s operated or developed by a corporation,” Kaminski said.

Another way to approach harmful AI outputs might be to apply the obvious observation that chatbots are not people but products manufactured by corporations for general consumption, a category for which there are plenty of existing legal frameworks, Kaminski noted. Just as a car company can be held responsible for a faulty brake that causes highway accidents, and just as Tesla has been sued for alleged malfunctions of its Autopilot, tech companies might be held responsible for flaws in their chatbots that end up harming users, Eugene Volokh, a First Amendment–law professor at UCLA, told me. If a lawsuit reveals a defect in a chatbot’s training data, algorithm, or safeguards that made it more likely to generate defamatory statements, and shows that there was a safer alternative, Brown said, a company could be responsible for negligently or recklessly releasing a libel-prone product. Whether a company sufficiently warned users that its chatbot is unreliable could also be at issue.

Consider one current chatbot defamation case, against Microsoft, which follows similar contours to the chess-cheating scenario: Jeffery Battle, a veteran and an aviation consultant, alleges that an AI-powered response in Bing stated that he pleaded guilty to seditious conspiracy against the United States. Bing confused this Battle with Jeffrey Leon Battle, who indeed pleaded guilty to such a crime, a conflation that, the complaint alleges, has damaged the consultant’s business. To win, Battle may have to prove that Microsoft was negligent or reckless about the AI falsehoods, which, Volokh noted, could be easier because Battle claims that he notified Microsoft of the error and that the company didn’t take timely action to fix it. (Microsoft declined to comment on the case.)

The product-liability analogy is not the only way forward. Europe, Kaminski noted, has taken the route of risk mitigation: If tech companies are going to release high-risk AI systems, they have to adequately assess and prevent that risk before doing so. Whether and how any of these approaches will apply to AI and libel in court, specifically, must be litigated. But there are options. A frequent refrain is that “tech moves too fast for the law,” Kaminski said, and that the law needs to be rewritten for every technological breakthrough. It doesn’t, and for AI libel, “the framework ought to be pretty similar” to existing law, Volokh told me.

ChatGPT and Google Gemini might be new, but the industries rushing to implement them—pharmaceutical and consulting and tech and energy—have long been sued for breaking antitrust, consumer-protection, false-claims, and pretty much any other law. The Federal Trade Commission, for instance, has issued a number of warnings to tech companies about false-advertising and privacy violations regarding AI products. “Your AI copilots are not gods,” an attorney at the agency recently wrote. Indeed, for the foreseeable future, AI will remain more adjective than noun—the term AI is a synecdoche for an artificial-intelligence tool or product. American law, in turn, has been regulating the internet for decades, and corporations for centuries.