
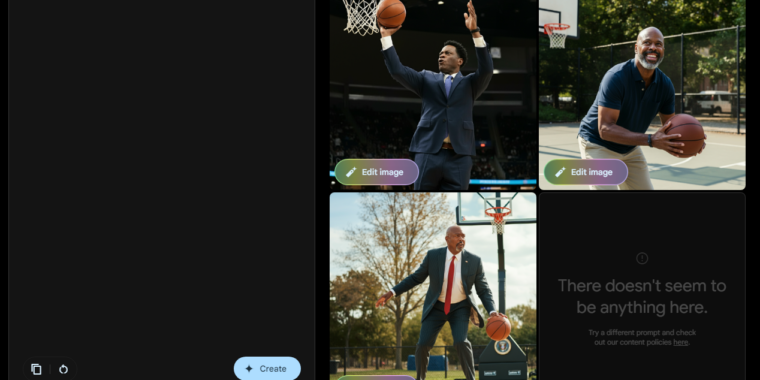

Imagen 3’s vision of a basketball-playing president is a bit akin to the Fresh Prince’s Uncle Phil.

Google / Ars Technica

Asking for images of specific presidents from Imagen 3 leads to a refusal.

Google / Ars Technica

Google’s Gemini AI model is once again able to generate images of humans after that function was “paused” in February following outcry over historically inaccurate racial depictions in many results.

In a blog post, Google said that its Imagen 3 model—which was first announced in May—will “start to roll out the generation of images of people” to Gemini Advanced, Business, and Enterprise users in the “coming days.” But a version of that Imagen model—complete with human image-generation capabilities—was recently made available to the public via the Gemini Labs test environment without a paid subscription (though a Google account is needed to log in).

That new model comes with some safeguards to try to avoid the creation of controversial images, of course. Google writes in its announcement that it doesn’t support “the generation of photorealistic, identifiable individuals, depictions of minors or excessively gory, violent or sexual scenes.” In an FAQ, Google clarifies that the prohibition on “identifiable individuals” includes “certain queries that could lead to outputs of prominent people.” In Ars’ testing, that meant a query like “President Biden playing basketball” would be refused, while a more generic request for “a US president playing basketball” would generate multiple options.

In some quick tests of the new Imagen 3 system, Ars found that it avoided many of the widely shared “historically inaccurate” racial pitfalls that led Google to pause Gemini’s generation of human images in the first place. Asking Imagen 3 for a “historically accurate depiction of a British king,” for instance, now generates a set of bearded white guys in red robes rather than the racially diverse mix of warriors from the pre-pause Gemini model. More before/after examples of the old Gemini and the new Imagen 3 can be found in the gallery below.


Imagen 3’s imagining of some stereotypical popes…

Google Imagen / Ars Technica

…and the pre-pause Gemini’s version.


Imagen’s imaginings of an 1800s Senator…

Google Imagen / Ars Technica

…and pre-pause Gemini’s. The first woman was elected to the Senate in the 1930s.


Imagen 3’s version of Scandinavian ice fishers…


…and the pre-pause Gemini’s version.


Imagen 3’s version of an old Scottish couple…

Google Imagen / Ars Technica

…and the pre-pause Gemini version.


Imagen 3’s version of a Canadian hockey player…

Google Imagen / Ars Technica

…and pre-pause Gemini’s version.


Imagen 3’s version of a generic US founding father…

Google Imagen / Ars Technica

…and the pre-pause Gemini version.


Imagen 3’s 15th-century New World explorers look suitably European.

Google Imagen / Ars Technica

Some attempts to depict generic historical scenes seem to fall afoul of Google’s AI rules, though. Asking for illustrations of “a 1943 German soldier” (which Gemini previously answered with Asian and Black people in Nazi-esque uniforms) now produces a message telling users to “try a different prompt and check out our content policies.” Requests for images of “ancient chinese philosophers,” “a woman’s suffrage leader giving a speech,” and “a group of nonviolent protesters” led to the same error message in Ars’ testing.

“Of course, as with any generative AI tool, not every image Gemini creates will be perfect, but we’ll continue to listen to feedback from early users as we keep improving,” the company writes on its blog. “We’ll gradually roll this out, aiming to bring it to more users and languages soon.”

Listing image by Google / Ars Technica